HPC Documentation

THIS SITE IS DEPRECATED

We have transitioned to another service and are no longer actively updating this site.

Refer to our new documentation at: hpcdocs.hpc.arizona.edu

Our Mission

UA High Performance Computing (HPC) is an interdisciplinary research center focused on facilitating research and discoveries that advance science and technology. We deploy and operate advanced computing and data resources for the research activities of students, faculty, and staff at the University of Arizona. We also provide consulting, technical documentation, and training to support our users.

This site is divided into sections that describe the High Performance Computing (HPC) resources that are available, how to use them, and the rules for use.

- User Guide — This section has the basic knowledge that will introduce you to the resources and provides information on account registration, system access, how to run jobs, and how to request help.

- Resources — Detailed information on compute, storage, software, grant, data center, and external (XSEDE, CyVerse, etc.) resources.

- Training — Workshop

- Policies — Policies related to topics that include acceptable use, access, acknowledgements, buy-in, instruction, maintenance, and special projects.

- Results — A list of research publications that utilized UArizona's HPC system resources.

- FAQ — A collection of frequently asked questions and their solutions.

- Secure HPC

- User Portal — Manage and create groups, request rental storage, manage delegates, delete your account, and submit special project requests.

- Open OnDemand — Graphical interface for accessing HPC and applications.

- Job Examples — View and download sample SLURM jobs from our GitHub site.

- Training Videos — Visit our YouTube channel for instructional videos, researcher stories, and more.

- Getting Help — Request help from our team.

Highlighted Research

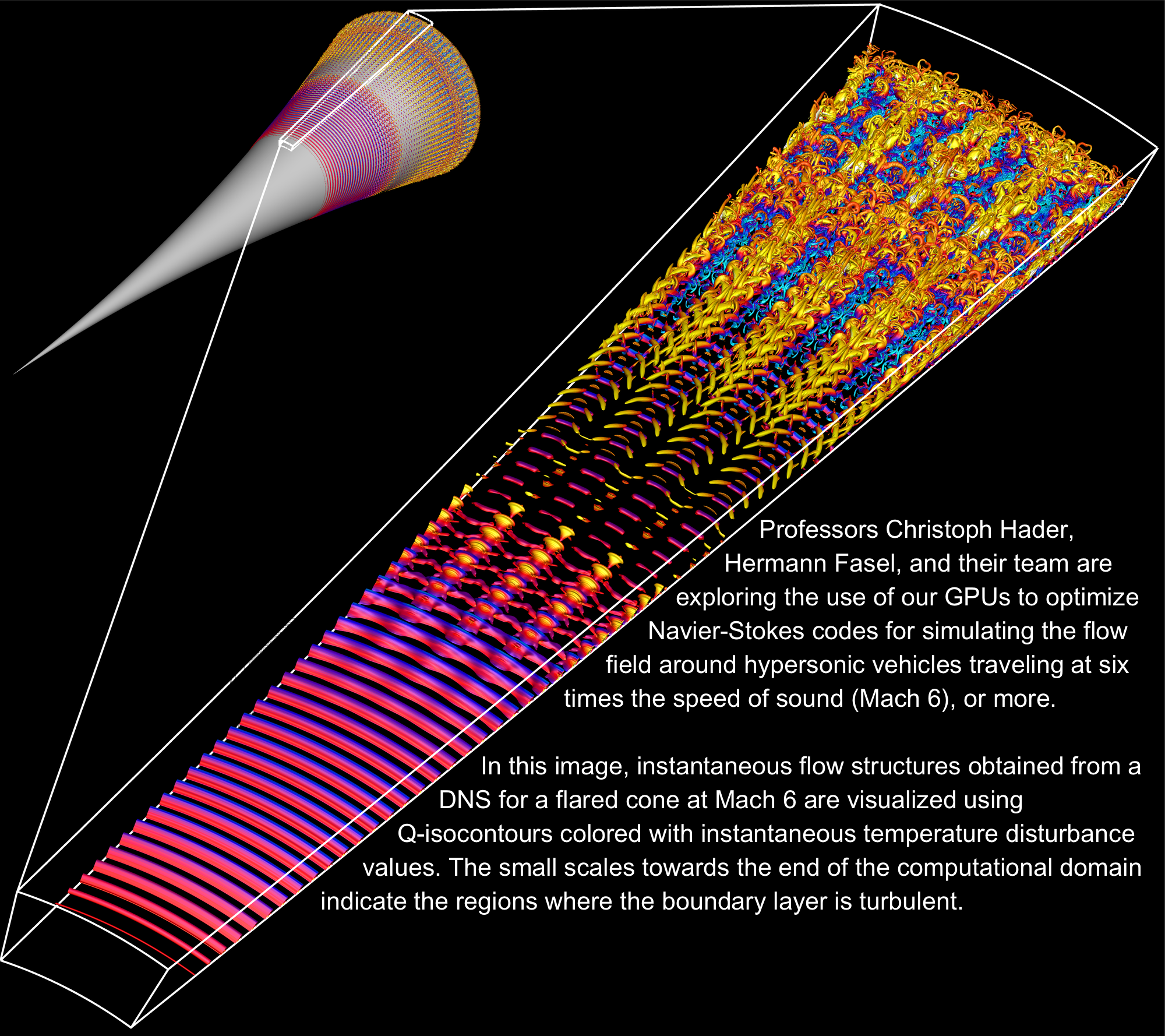

Faster Speeds Need Faster Computation - Hypersonic Travel

Quick News

Increased Time Allocations

Beginning on March 1st, 2024, the monthly allocation for each group was increased to 150,000 CPU hours on Puma (previously 100,000) and 100,000 CPU hours on Ocelote (previously 70,000).

Faster Interactive Sessions

Are you frustrated waiting for slow interactive sessions to start? Try using the standard queue on ElGato. We have provisioned 44 nodes to accept only the standard queue, which facilitates faster connections. For how to start a session and for more information on interactive sessions, see our page: Running Jobs With SLURM.

Singularity is Now Apptainer

Singularity has been renamed Apptainer as the project is brought into the Linux Foundation. An alias exists so that you can continue to invoke it under its old name. We keep only a reasonably current version of Apptainer; prior versions are removed because only the latest one is considered secure. Apptainer is installed on all of the system's compute nodes and can be accessed without using a module.
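The allocation figures in the Increased Time Allocations item above can be put in perspective with a little arithmetic. The sketch below is illustrative only: it assumes a job is charged `cores * wall-clock hours`, which this page does not state, and the function names and the 16-core, 10-hour job are hypothetical. Only the allocation numbers come from the announcement.

```python
# Monthly CPU-hour allocations per group, taken from the announcement above.
ALLOCATIONS = {
    "Puma": {"previous": 100_000, "current": 150_000},
    "Ocelote": {"previous": 70_000, "current": 100_000},
}


def cpu_hours(cores: int, wall_hours: float) -> float:
    """Estimated charge for one job.

    ASSUMPTION: charge = cores * wall-clock hours. The real accounting
    rules may differ (for example, by memory or GPU use).
    """
    return cores * wall_hours


def percent_increase(previous: int, current: int) -> float:
    """Relative growth of an allocation, in percent."""
    return (current - previous) / previous * 100


def jobs_per_month(cluster: str, cores: int, wall_hours: float) -> int:
    """How many identical jobs fit in the current monthly allocation."""
    return int(ALLOCATIONS[cluster]["current"] // cpu_hours(cores, wall_hours))


if __name__ == "__main__":
    for name, alloc in ALLOCATIONS.items():
        growth = percent_increase(alloc["previous"], alloc["current"])
        # Hypothetical job shape: 16 cores for 10 hours.
        fits = jobs_per_month(name, cores=16, wall_hours=10)
        print(f"{name}: +{growth:.1f}% allocation, about {fits} such jobs per month")
```

Under that assumption, the same helper can be used to check whether a planned batch of jobs would fit within a group's monthly allocation before submitting it.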

Calendars

| Date | Event |
|---|---|
| October 3, 6 AM to 12 PM | Electrical maintenance. ElGato will be unavailable during this period. |
| July 26, 6 AM to 6 PM | Quarterly maintenance. |
| April 26, 6 AM to 6 PM | Maintenance downtime for ALL HPC services. |
| January 25, 6 AM to 6 PM | Maintenance downtime for ALL HPC services. |

| Workshop | Date | Time | Location | Registration | Details |
|---|---|---|---|---|---|
| NVIDIA GPUs with Python | October 27, 2023 | 10 AM-12 Noon | Virtual | Qualtrics | |
| MATLAB: Parallel Computing | February 15, 2024 | 10 AM-12 Noon | In person | Registration | Details |
| MATLAB: Deep Learning | February 16, 2024 | 10 AM-12 Noon | In person | Registration | Details |
| Intro to HPC | March 1, 2024 | 10-11:30 AM | Main Library CATalyst B201 | Registration | Details |
| Data Management on HPC | March 6, 2024 | 10-11:30 AM | Virtual | Registration | Details |
| Parallel Computing on HPC | March 14, 2024 | 10-11 AM | Main Library CATalyst B252 | Registration | Details |
| Visualization on HPC | March 14, 2024 | 11 AM-12 Noon | Main Library | Registration | Details |
| ML with Python on HPC | March 12, 2024 | 10-11 AM | Main Library CATalyst B254 | Registration | Details |
| ML with RStudio on HPC | March 12, 2024 | 11 AM-12 Noon | Main Library CATalyst B254 | Registration | Details |